- How to install apache spark on windows 10

- #How to install apache spark on windows 10 how to

- #How to install apache spark on windows 10 zip file

- #How to install apache spark on windows 10 update

A successful launch of spark-shell prints startup output similar to the following:

WARNING: An illegal reflective access operation has occurred

WARNING: Illegal reflective access by .Platform (file:/opt/spark/jars/spark-unsafe_2.12-3.0.1.jar) to constructor (long,int)

WARNING: Please consider reporting this to the maintainers of .Platform

WARNING: Use --illegal-access=warn to enable warnings of further illegal reflective access operations

WARNING: All illegal access operations will be denied in a future release

20/09/09 22:48:09 WARN NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

Using Spark's default log4j profile: org/apache/spark/log4j-defaults.properties

To adjust logging level use sc.setLogLevel(newLevel).

Spark context available as 'sc' (master = local, app id = local-1599706095232).

Using Scala version 2.12.10 (OpenJDK 64-Bit Server VM, Java 11.0.8)

Type in expressions to have them evaluated.

If you want a more comfortable environment, install an IDE such as IntelliJ IDEA or Eclipse, which makes it simpler to import packages and write code.

#How to install apache spark on windows 10 update

Once you have updated the Path, open a new Command Prompt and type spark-shell; you should get the Spark prompt for interactive development.
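Before opening the shell, it can help to confirm that the launcher is actually reachable from the updated Path. The following is a minimal sketch in Python, not part of the original steps; the function name `find_spark_shell` is a hypothetical helper introduced here for illustration.

```python
import shutil


def find_spark_shell(command="spark-shell"):
    """Return the full path of the launcher if it is on PATH, else None."""
    # On Windows, shutil.which also tries the extensions listed in PATHEXT,
    # so a launcher such as spark-shell.cmd would match as well.
    return shutil.which(command)


if __name__ == "__main__":
    location = find_spark_shell()
    if location:
        print(f"spark-shell found at {location}")
    else:
        print("spark-shell is not on PATH; open a new prompt or re-check the Path entry")
```

Run it from a newly opened Command Prompt, because prompts that were already open do not pick up changes to the environment variables.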

#How to install apache spark on windows 10 zip file

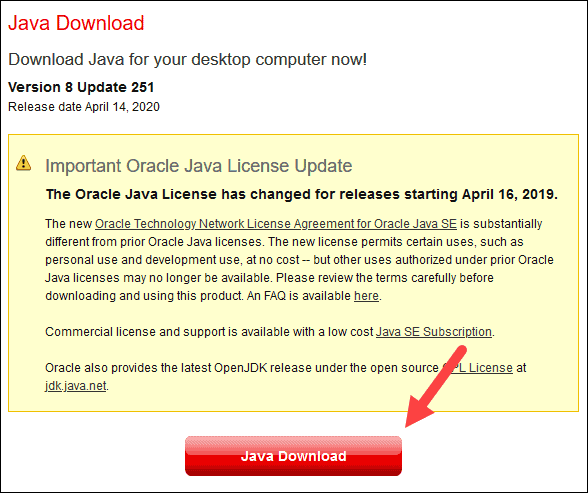

In Big Data environments, Spark is used for large-scale data processing with a cache-based access system. Apache Spark does not need to be installed on top of Hadoop, but it does need the Hadoop Distributed File System (HDFS) for large data sets. Some servers also run Spark alongside tools such as Sqoop, Hive, and MapReduce, and in that case Hadoop is mandatory. For Spark developers, a Spark installation on a single-node cluster is enough. Java is a prerequisite for installing Spark because Spark runs on the Java Virtual Machine. Once the Spark zip file is downloaded, extract it, set “SPARK_HOME” in the environment variables, and update the Path as well.
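The “SPARK_HOME” and Path settings described above can be sanity-checked with a short script. This is only a sketch under stated assumptions: it assumes the launchers live in a `bin` folder under “SPARK_HOME” and that this `bin` folder is the entry added to the Path, and `spark_home_problems` is a hypothetical helper name, not something the Spark distribution provides.

```python
import os
from pathlib import Path


def spark_home_problems(env):
    """Return a list of problems found; an empty list means the checks passed."""
    spark_home = env.get("SPARK_HOME")
    if not spark_home:
        return ["SPARK_HOME is not set"]

    problems = []
    # Assumption: the Spark launchers sit in a "bin" folder under SPARK_HOME.
    bin_dir = Path(spark_home) / "bin"
    if not bin_dir.is_dir():
        problems.append(f"{bin_dir} does not exist")

    # Normalise both sides so trailing separators (and case on Windows) do not matter.
    wanted = os.path.normcase(os.path.normpath(str(bin_dir)))
    entries = [
        os.path.normcase(os.path.normpath(entry))
        for entry in env.get("PATH", "").split(os.pathsep)
        if entry
    ]
    if wanted not in entries:
        problems.append(f"{bin_dir} is not on PATH")
    return problems


if __name__ == "__main__":
    found = spark_home_problems(os.environ)
    print("Spark environment looks fine" if not found else "\n".join(found))
```

Passing the environment in as a mapping keeps the check easy to try against a sample dictionary before running it against the real `os.environ`.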

#How to install apache spark on windows 10 how to

Summary: The above steps are showing how to set up Spark on Windows/ Windows 10 operating systems with simple steps for Spark developers and Hadoop Developers/Admins.

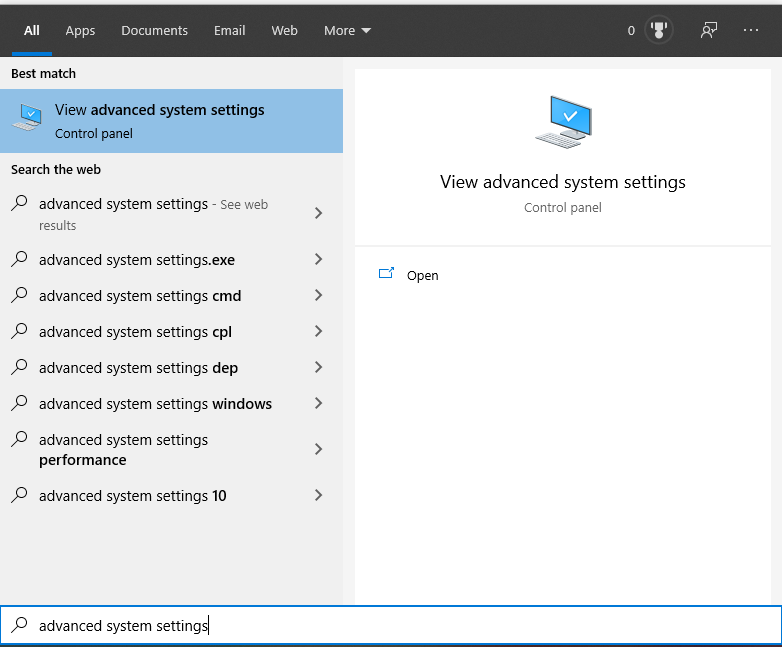

In case if you do not get the Spark-shell then restart your machine. Step 7: Open new Command prompt then type below command: spark-shellĬongratulations! Spark successfully completed on Windows 10 operating system. To install Spark, run the following command from the command line or from. Then click on the “OK” button and save it everything. Chocolatey is software management automation for Windows that wraps installers. Step 6: Then update the “SPARK_HOME” Path in the “Environment_Variable”. Extract the Spark zip file by using WinRar. Step 5: Download the Spark latest version zip from Spark official website. Step 4: In System Variable give Variable Name like “HADOOP_HOME” after that give Variable value like “C:/wintuils” path then click on the “OK” button. Step 3: Click on “Environment Variables” to update the Hadoop_Home Path

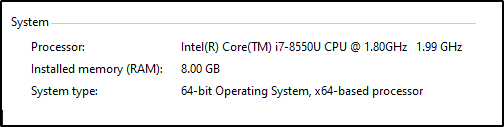

Step 2: Next step update the environment variable, open the “Advanced system setting: Step 1: Download winutils.exe Windows 10 operating system from git hub below link:Īfter downloading the Wintulins file and create your own convenient. How To Install Apache Spark On Windows 10: Some of the professionals’ Spark installation on Linuxbut some professionals need to install Spark on Windows 10 for their comfort. In this article, we will explain the Apache Spark installation on Windows 10 with simple steps by using the “Wintuils.exe” file.
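The “HADOOP_HOME” setting from the steps above can be verified with a small script. This is a sketch, not part of the original article: it assumes the usual Hadoop layout in which winutils.exe sits in a `bin` folder under “HADOOP_HOME”, and the helper names `winutils_path` and `check_winutils` are hypothetical.

```python
import os
from pathlib import Path


def winutils_path(env):
    """Return the expected winutils.exe location, or None if HADOOP_HOME is unset."""
    hadoop_home = env.get("HADOOP_HOME")
    if not hadoop_home:
        return None
    # Assumption: winutils.exe is kept in a "bin" folder under HADOOP_HOME.
    return Path(hadoop_home) / "bin" / "winutils.exe"


def check_winutils(env):
    """Return "ok" or a short message describing what is missing."""
    path = winutils_path(env)
    if path is None:
        return "HADOOP_HOME is not set"
    if not path.is_file():
        return f"winutils.exe not found at {path}"
    return "ok"


if __name__ == "__main__":
    print(check_winutils(os.environ))
```

If the script reports a missing file, moving winutils.exe into the `bin` subfolder or correcting the variable value is usually quicker than restarting the machine.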
